How to Use FastBCP with Kestra

Integrating FastBCP into Kestra allows you to build robust, automated data export workflows that leverage parallel processing while maintaining full observability and orchestration control.

In this integration guide, we'll show you how to run FastBCP export tasks in Kestra, configure secure credential management, and monitor execution results for exports to cloud storage.

Why Use FastBCP with Kestra?

Kestra is a modern, open-source orchestration platform designed for data workflows. Combining it with FastBCP gives you:

- Declarative workflows: Define complex data export pipelines in simple YAML

- Parallel execution: Leverage FastBCP's multi-threaded exports within orchestrated flows

- Logging and monitoring: Capture execution details, export statistics, and errors in Kestra's UI

- Scheduling: Run data exports on cron schedules or trigger them via API

- Secure credential management: Use Kestra secrets for database passwords and cloud credentials

- Multi-format support: Export to Parquet, CSV, JSON, and more

- Cloud integration: Export directly to S3, Azure Blob Storage, or local file systems

Prerequisites

Before starting, ensure you have:

- Kestra installed and running (Docker Compose, Kubernetes, or standalone)

- Docker available to Kestra (for running FastBCP containers)

- A valid FastBCP license (request a trial license)

- Source database accessible from your Kestra environment

- AWS credentials configured (if exporting to S3)

For local testing, you can start Kestra quickly with Docker Compose; see the Kestra quickstart guide.

What is Kestra?

Kestra is an open-source orchestration and scheduling platform built for data engineering teams. It provides:

- YAML-based workflow definitions: Declare your entire pipeline in code

- Rich plugin ecosystem: Native integrations with databases, cloud services, containers

- Built-in monitoring: Track executions, logs, and metrics in a unified UI

- API-first design: Trigger and manage workflows programmatically

- Secret management: Securely store and retrieve credentials

Docs: https://kestra.io/docs

Setting Up FastBCP in Kestra

Kestra can run FastBCP via the Docker task plugin, which executes containerized commands and captures their output.

1. Configure Secrets in Kestra

First, add your credentials as Kestra secrets. Navigate to Tenant > Secrets and add:

- FASTBCP_MSSQL_USER: SQL Server username

- FASTBCP_MSSQL_PASSWORD: SQL Server password

- FASTBCP_AWS_ACCESS_KEY_ID: AWS access key

- FASTBCP_AWS_SECRET_ACCESS_KEY: AWS secret key

- FASTBCP_AWS_DEFAULT_REGION: AWS region (e.g., us-east-1)

Kestra secrets are encrypted at rest and never logged in plain text, ensuring secure credential management.

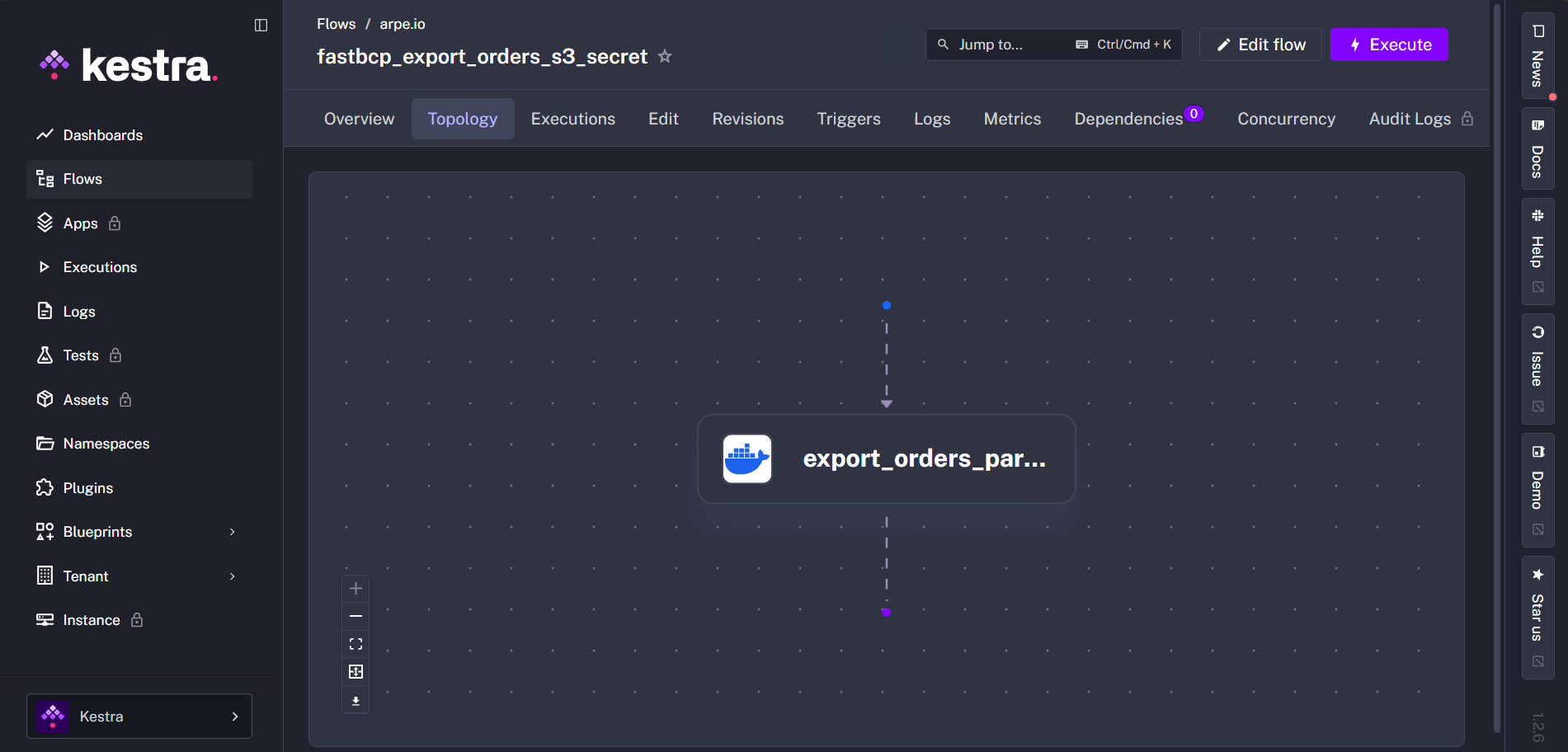

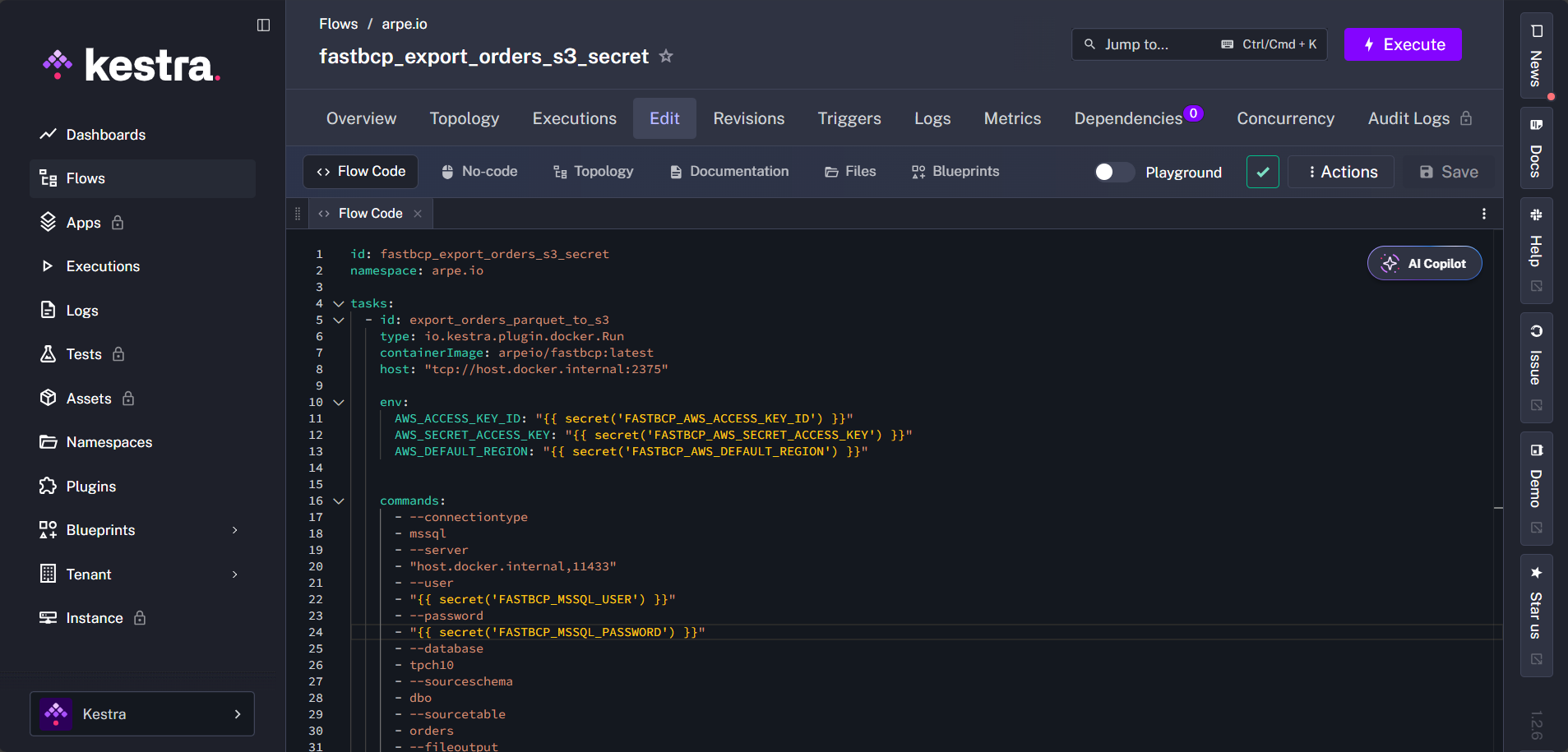
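If your Kestra deployment does not offer a Secrets page in the UI, the open-source edition can also read secrets from environment variables prefixed with SECRET_, whose values are base64-encoded. The fragment below is a minimal sketch for a Docker Compose setup; the service name kestra and the placeholder values are assumptions to adapt to your own environment.

```yaml
# docker-compose.yml (fragment); the service name "kestra" is an assumption
services:
  kestra:
    environment:
      # Each value must be the base64 encoding of the real secret,
      # for example the output of: echo -n 'my-password' | base64
      SECRET_FASTBCP_MSSQL_USER: "<base64-encoded username>"
      SECRET_FASTBCP_MSSQL_PASSWORD: "<base64-encoded password>"
      SECRET_FASTBCP_AWS_ACCESS_KEY_ID: "<base64-encoded access key>"
      SECRET_FASTBCP_AWS_SECRET_ACCESS_KEY: "<base64-encoded secret key>"
      SECRET_FASTBCP_AWS_DEFAULT_REGION: "<base64-encoded region>"
```

With this approach, flows reference each secret without the prefix, for example {{ secret('FASTBCP_MSSQL_USER') }}.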

2. Create a Kestra Flow

Here's a complete example of a Kestra flow that exports data from SQL Server to S3 in Parquet format using FastBCP.

    id: fastbcp_export_orders_s3_secret
    namespace: arpe.io
    description: Export orders table from SQL Server to S3 in Parquet format using FastBCP with parallel processing
    tasks:
      - id: export_orders_parquet_to_s3
        type: io.kestra.plugin.docker.Run
        containerImage: arpeio/fastbcp:latest
        host: "tcp://host.docker.internal:2375"
        env:
          AWS_ACCESS_KEY_ID: "{{ secret('FASTBCP_AWS_ACCESS_KEY_ID') }}"
          AWS_SECRET_ACCESS_KEY: "{{ secret('FASTBCP_AWS_SECRET_ACCESS_KEY') }}"
          AWS_DEFAULT_REGION: "{{ secret('FASTBCP_AWS_DEFAULT_REGION') }}"
        commands:
          - --connectiontype
          - mssql
          - --server
          - "host.docker.internal,11433"
          - --user
          - "{{ secret('FASTBCP_MSSQL_USER') }}"
          - --password
          - "{{ secret('FASTBCP_MSSQL_PASSWORD') }}"
          - --database
          - tpch10
          - --sourceschema
          - dbo
          - --sourcetable
          - orders
          - --fileoutput
          - orders.parquet
          - --directory
          - s3://fastbcp-export/orders/parquet
          - --parallelmethod
          - Ntile
          - --paralleldegree
          - "12"
          - --distributekeycolumn
          - o_orderkey
          - --merge
          - "false"
          - --nobanner

Key Points

- io.kestra.plugin.docker.Run: Kestra's Docker task that runs containerized commands

- env section: AWS credentials are passed as environment variables using Kestra secrets

- {{ secret('...') }}: Securely retrieves credentials from Kestra secrets

- --parallelmethod Ntile: Parallel distribution method using the Ntile algorithm

- --paralleldegree "12": Configures FastBCP to use 12 parallel workers

- --distributekeycolumn o_orderkey: Column used to distribute data across parallel workers

- --merge "false": Each parallel worker creates a separate file

- --directory s3://...: Direct export to S3 bucket

- --fileoutput orders.parquet: Output format (Parquet)

- --nobanner: Suppresses the FastBCP banner for cleaner logs

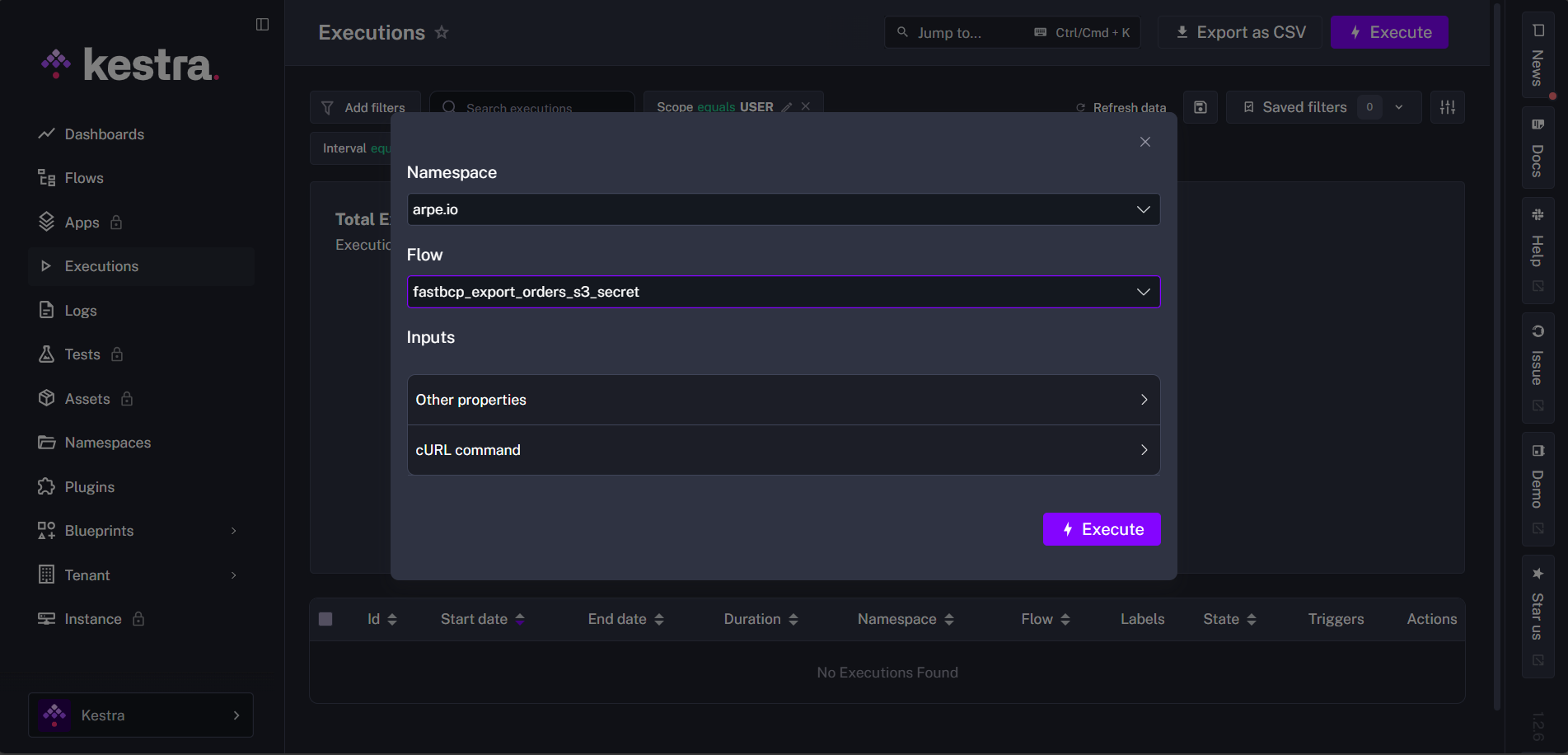
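The table name and parallel degree are hard-coded in the flow above. One possible variation is to expose them as Kestra flow inputs so the same flow can be reused for other tables. This is only a sketch: the input names source_table and parallel_degree and the task id are hypothetical, and the distribution key column would also need to match whichever table is selected.

```yaml
# Hypothetical inputs; the names source_table and parallel_degree are illustrative
inputs:
  - id: source_table
    type: STRING
    defaults: orders
  - id: parallel_degree
    type: INT
    defaults: 12

tasks:
  - id: export_table_parquet_to_s3
    type: io.kestra.plugin.docker.Run
    containerImage: arpeio/fastbcp:latest
    # host, env, and the remaining FastBCP arguments stay as in the flow above
    commands:
      - --sourcetable
      - "{{ inputs.source_table }}"
      - --fileoutput
      - "{{ inputs.source_table }}.parquet"
      - --paralleldegree
      - "{{ inputs.parallel_degree }}"
```

Values supplied at execution time replace the defaults, so running the flow without arguments behaves like the original orders export.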

Running the Flow

1. Execute the Flow from Kestra UI

Navigate to your flow in the Kestra UI and click Execute.

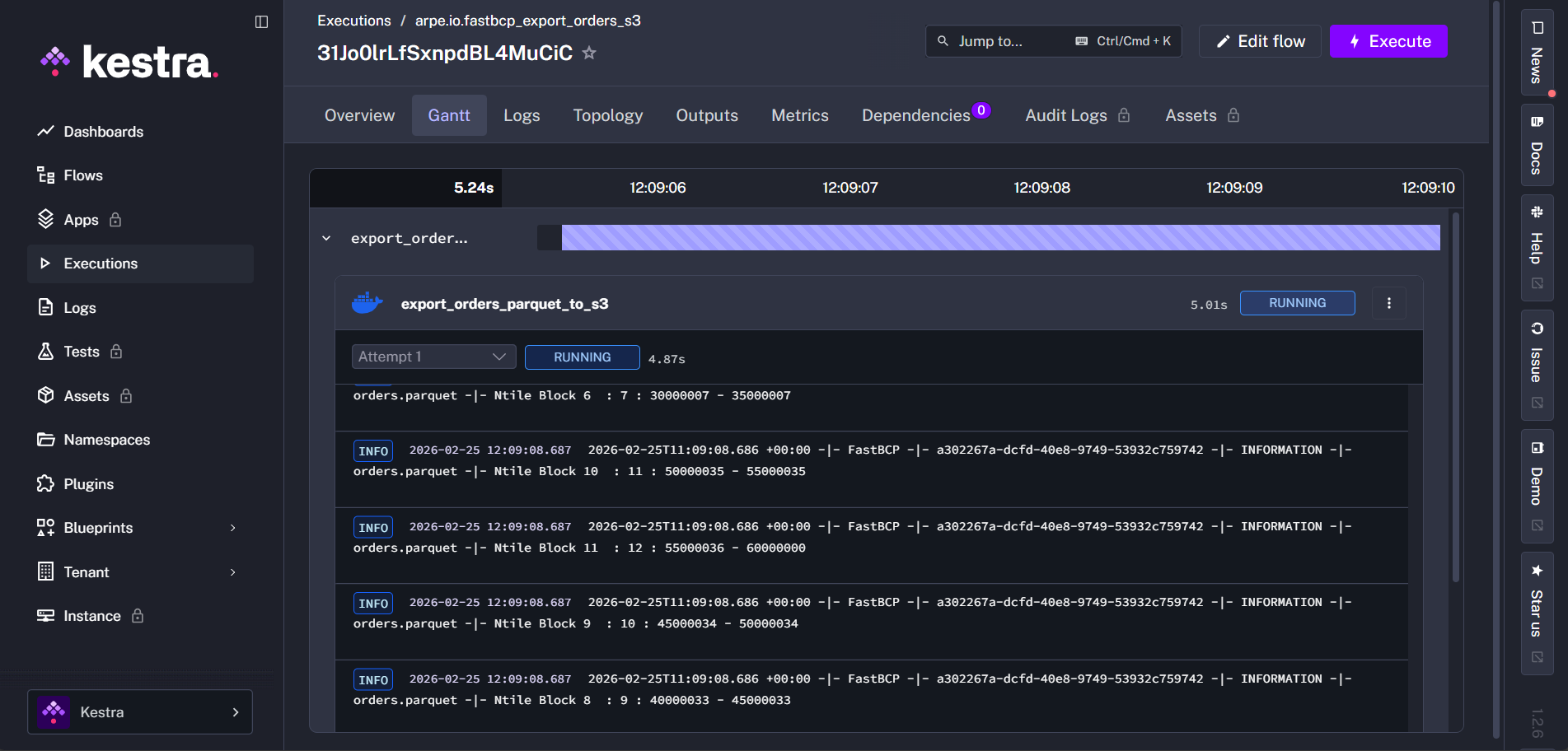

2. Monitor Execution

Kestra displays real-time logs as FastBCP runs:

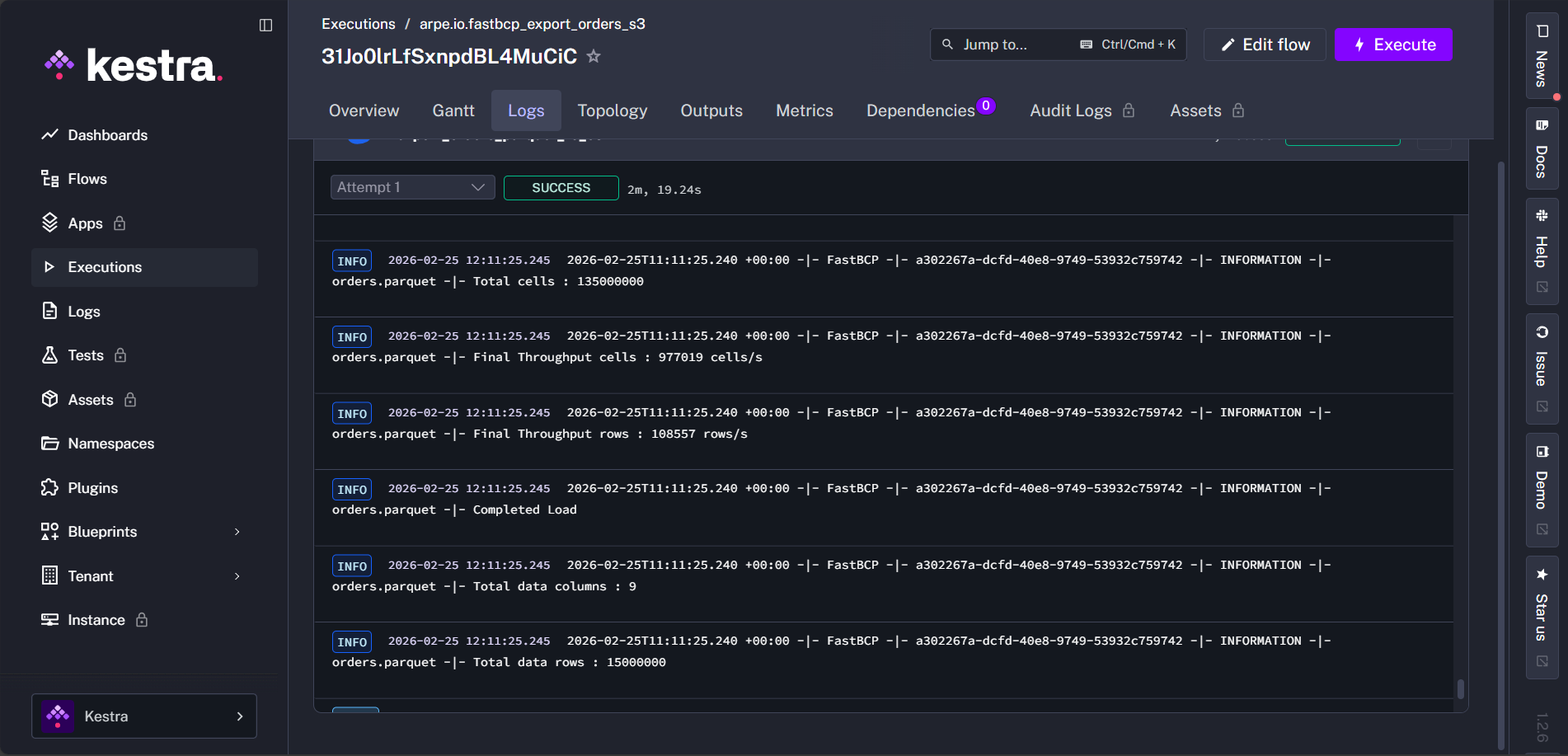

3. View Export Summary in Logs

Once the export completes successfully, FastBCP prints a detailed summary at the end of the execution logs, so the key metrics are visible directly in Kestra's log output:

- Total rows exported

- Elapsed time

- Throughput (rows/second)

- Success status

- Any errors or warnings
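To make failures easier to spot, a flow can also declare an errors block that runs when a task fails. The sketch below assumes the FastBCP container exits with a non-zero code on failure, which would mark the Docker task as failed; the task id log_export_failure is illustrative.

```yaml
# Added at the top level of the flow, alongside "tasks"
errors:
  - id: log_export_failure
    type: io.kestra.plugin.core.log.Log
    level: ERROR
    message: "FastBCP export failed in flow {{ flow.id }}, execution {{ execution.id }}"
```

The Log task here is only a placeholder; it could be swapped for a notification task from one of Kestra's plugins.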

Example Use Cases

Scheduled Daily Exports

Add a trigger to run the export daily at 2 AM:

    triggers:
      - id: daily_export_schedule
        type: io.kestra.plugin.core.trigger.Schedule
        cron: "0 2 * * *"

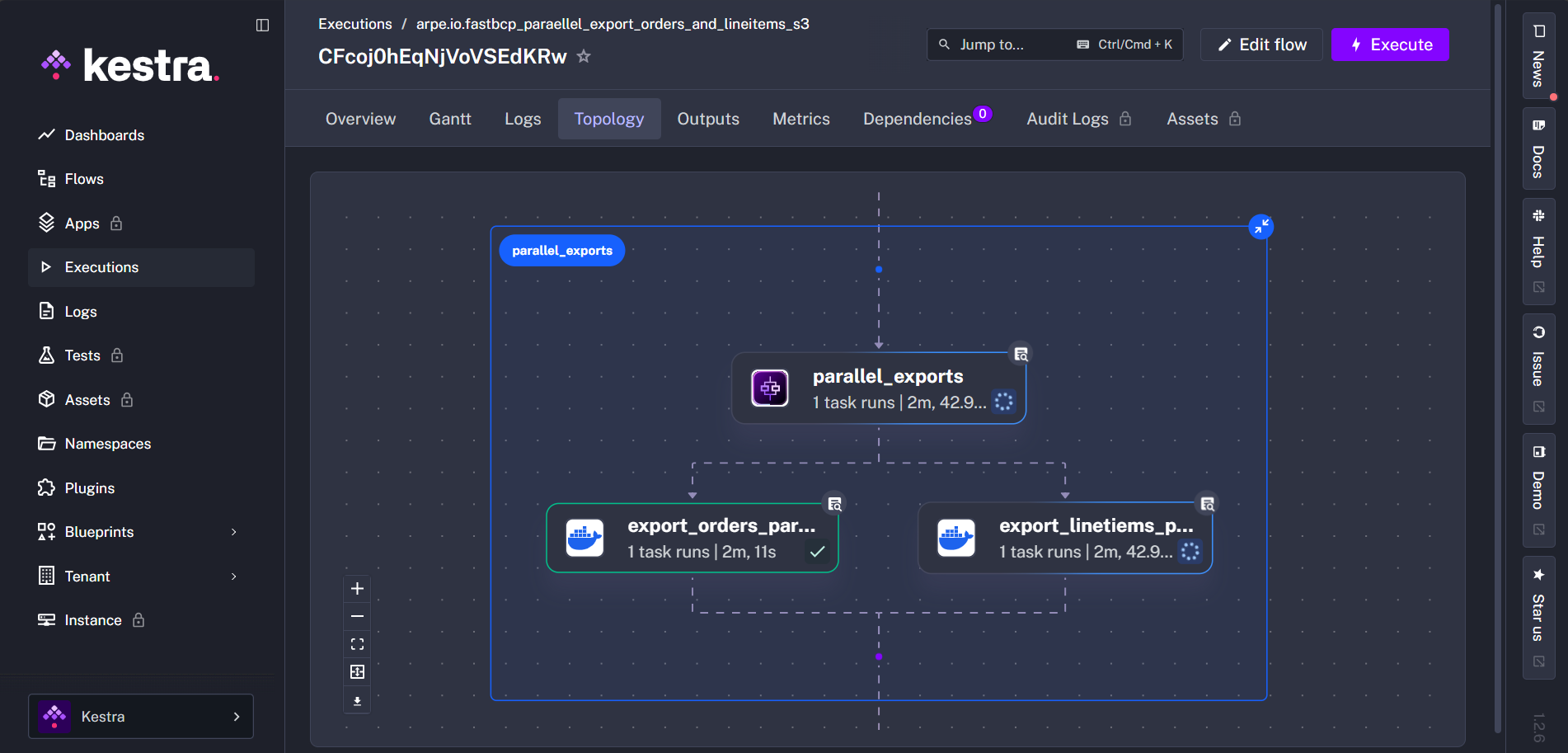
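The hour in a cron expression depends on the time zone it is evaluated in. If 2 AM should refer to a specific zone rather than the server default, the Schedule trigger accepts a timezone property. In this sketch the zone Europe/Paris is just an example value.

```yaml
triggers:
  - id: daily_export_schedule
    type: io.kestra.plugin.core.trigger.Schedule
    cron: "0 2 * * *"
    # Example zone only; use the IANA zone ID that fits your environment
    timezone: Europe/Paris
```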

Parallel Multi-Table Exports

Use Kestra's parallel task execution to export multiple tables simultaneously:

    id: fastbcp_parallel_export_orders_and_lineitems_s3
    namespace: arpe.io
    tasks:
      - id: parallel_exports
        type: io.kestra.plugin.core.flow.Parallel
        tasks:
          - id: export_orders_parquet_to_s3
            type: io.kestra.plugin.docker.Run
            containerImage: arpeio/fastbcp:latest
            host: "tcp://host.docker.internal:2375"
            env:
              AWS_ACCESS_KEY_ID: "{{ secret('FASTBCP_AWS_ACCESS_KEY_ID') }}"
              AWS_SECRET_ACCESS_KEY: "{{ secret('FASTBCP_AWS_SECRET_ACCESS_KEY') }}"
              AWS_DEFAULT_REGION: "{{ secret('FASTBCP_AWS_DEFAULT_REGION') }}"
            commands:
              - --connectiontype
              - mssql
              - --server
              - "host.docker.internal,11433"
              - --user
              - "{{ secret('FASTBCP_MSSQL_USER') }}"
              - --password
              - "{{ secret('FASTBCP_MSSQL_PASSWORD') }}"
              - --database
              - tpch10
              - --sourceschema
              - dbo
              - --sourcetable
              - orders
              - --fileoutput
              - orders.parquet
              - --directory
              - s3://fastbcp-export/orders/parquet
              - --parallelmethod
              - Ntile
              - --paralleldegree
              - "12"
              - --distributekeycolumn
              - o_orderkey
              - --merge
              - "false"
              - --nobanner
          - id: export_lineitem_parquet_to_s3
            type: io.kestra.plugin.docker.Run
            containerImage: arpeio/fastbcp:latest
            host: "tcp://host.docker.internal:2375"
            env:
              AWS_ACCESS_KEY_ID: "{{ secret('FASTBCP_AWS_ACCESS_KEY_ID') }}"
              AWS_SECRET_ACCESS_KEY: "{{ secret('FASTBCP_AWS_SECRET_ACCESS_KEY') }}"
              AWS_DEFAULT_REGION: "{{ secret('FASTBCP_AWS_DEFAULT_REGION') }}"
            commands:
              - --connectiontype
              - mssql
              - --server
              - "host.docker.internal,11433"
              - --user
              - "{{ secret('FASTBCP_MSSQL_USER') }}"
              - --password
              - "{{ secret('FASTBCP_MSSQL_PASSWORD') }}"
              - --database
              - tpch10
              - --sourceschema
              - dbo
              - --sourcetable
              - lineitem
              - --fileoutput
              - lineitem.parquet
              - --directory
              - s3://fastbcp-export/lineitem/parquet
              - --parallelmethod
              - Ntile
              - --paralleldegree
              - "12"
              - --distributekeycolumn
              - l_orderkey
              - --merge
              - "false"
              - --nobanner
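Both export tasks repeat the same container image, Docker host, and AWS environment variables. One way to reduce that duplication is a top-level pluginDefaults block, sketched below on the assumption that your Kestra version supports pluginDefaults (older releases used the name taskDefaults). With it in place, each docker.Run task would only need its id, type, and commands.

```yaml
# Added at the top level of the flow, alongside "tasks"
pluginDefaults:
  - type: io.kestra.plugin.docker.Run
    values:
      containerImage: arpeio/fastbcp:latest
      host: "tcp://host.docker.internal:2375"
      env:
        AWS_ACCESS_KEY_ID: "{{ secret('FASTBCP_AWS_ACCESS_KEY_ID') }}"
        AWS_SECRET_ACCESS_KEY: "{{ secret('FASTBCP_AWS_SECRET_ACCESS_KEY') }}"
        AWS_DEFAULT_REGION: "{{ secret('FASTBCP_AWS_DEFAULT_REGION') }}"
```

Because each task runs FastBCP with 12 parallel workers, running several tasks at once multiplies the load on the source database; the Parallel task's concurrent property can cap how many child tasks run at the same time.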

Conclusion

Integrating FastBCP with Kestra gives you a powerful combination: Kestra's orchestration and monitoring capabilities paired with FastBCP's high-performance parallel data exports.

Whether you're scheduling daily exports to data lakes, orchestrating complex multi-table extractions, or building event-driven data pipelines to cloud storage, this integration provides the flexibility and performance modern data teams need.

Want to try it on your own data? Download FastBCP and get a free 30-day trial.

Resources

- FastBCP: https://fastbcp.arpe.io

- FastBCP Docker Image: https://hub.docker.com/repository/docker/arpeio/fastbcp

- FastBCP Documentation: https://fastbcp-docs.arpe.io/latest/

- FastBCP Docker Integration: https://fastbcp-docs.arpe.io/latest/integration/docker-integration

- Kestra Documentation: https://kestra.io/docs

- Kestra Docker Plugin: https://kestra.io/plugins/plugin-docker

- Kestra Secrets Management: https://kestra.io/docs/concepts/secret