How to Use FastTransfer with Apache Airflow

Integrating FastTransfer into Apache Airflow allows you to build robust, production-grade data transfer workflows that leverage parallel processing while maintaining full observability and orchestration control.

In this integration guide, we'll show you how to run FastTransfer tasks in Airflow using the Docker operator, configure secure connection management, and monitor execution results.

Why Use FastTransfer with Apache Airflow?

Apache Airflow is the industry-standard platform for authoring, scheduling, and monitoring data pipelines. Combining it with FastTransfer gives you:

- Production-ready orchestration: Build complex data pipelines with dependencies, retries, and error handling

- Parallel execution: Leverage FastTransfer's multi-threaded transfers within orchestrated DAGs

- Secure credential management: Store database credentials centrally using Airflow connections

- Monitoring and alerting: Track execution details, transfer statistics, and performance metrics in Airflow's UI

- Scheduling flexibility: Run data transfers on cron schedules, event triggers, or via API

- Multi-database support: Transfer data between SQL Server, PostgreSQL, Oracle, MySQL, and more

Prerequisites

Before starting, ensure you have:

- Apache Airflow installed and running (standalone, Docker Compose, or Kubernetes)

- Docker available to Airflow (for running FastTransfer containers)

- apache-airflow-providers-docker package installed

- A valid FastTransfer license (request a trial license)

- Source and target databases accessible from your Airflow environment

For local testing, Airflow can be quickly started with Docker Compose or using airflow standalone. See the Airflow quickstart guide.
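Before building the DAG, it can help to confirm the prerequisites from inside the Airflow environment. The following is a minimal sketch, not part of FastTransfer or Airflow: the helper names `has_module` and `check_prerequisites` are our own, and the Docker CLI check only matters if Airflow reaches Docker through a local client rather than a remote daemon.

```python
import importlib.util
import shutil


def has_module(name: str) -> bool:
    """Return True if `name` can be located in the current environment."""
    try:
        return importlib.util.find_spec(name) is not None
    except (ImportError, ValueError):
        # ImportError covers the case where a parent package (e.g. `airflow`)
        # is itself missing; ValueError covers modules with a broken spec.
        return False


def check_prerequisites() -> dict:
    """Collect quick yes/no checks for the prerequisites listed above."""
    return {
        "airflow": has_module("airflow"),
        "docker_provider": has_module("airflow.providers.docker"),
        # Only relevant when a local Docker CLI is expected on this machine.
        "docker_cli": shutil.which("docker") is not None,
    }


if __name__ == "__main__":
    for name, ok in check_prerequisites().items():
        print(f"{name}: {'ok' if ok else 'missing'}")
```

Run it with the same Python interpreter that Airflow uses, so the result reflects the packages Airflow can actually import.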

What is Apache Airflow?

Apache Airflow is a powerful open-source platform for developing, scheduling, and monitoring batch-oriented workflows. It provides:

- Python-based DAGs: Define your entire pipeline as code using Python

- Rich operator ecosystem: Native integrations with databases, cloud services, containers, and more

- Built-in monitoring: Track executions, logs, and metrics in a unified web UI

- Scalability: Run on a single machine or scale to thousands of workers

- Active community: Extensive documentation and a thriving ecosystem of plugins

Docs: https://airflow.apache.org/docs/

Configure Airflow Connections

Airflow connections allow you to securely store and manage credentials for external systems. FastTransfer integration requires connections for your source and target databases.

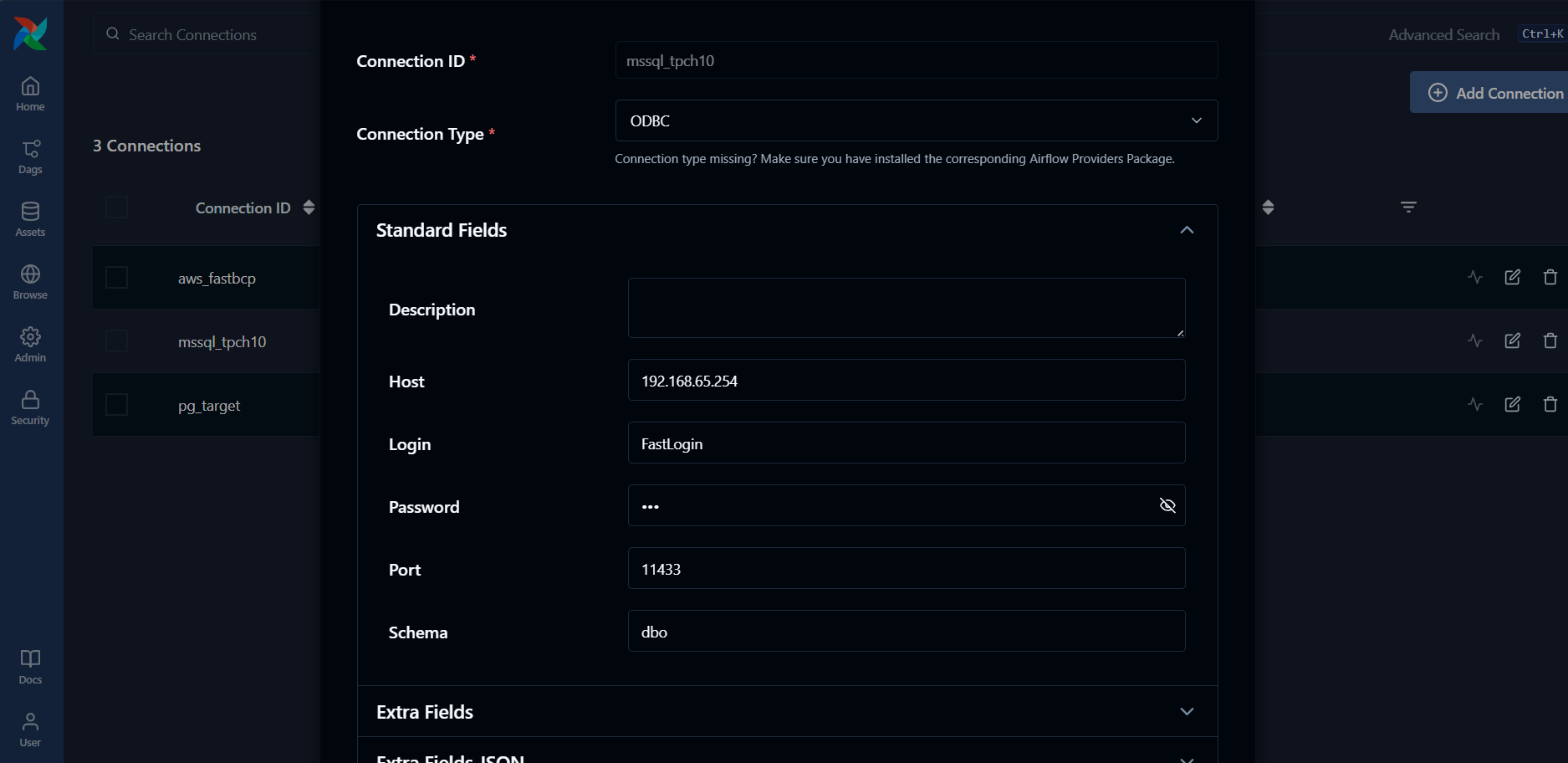

A) Source Database Connection (MSSQL Example)

Create an ODBC connection that points to your SQL Server source database:

- Navigate to Admin → Connections in the Airflow UI

- Click + to create a new connection

- In the Connection Type dropdown, select ODBC

- Configure the connection:

Connection Settings:

- Connection Id: mssql_tpch10 (or your preferred name)

- Connection Type: ODBC (select this from the dropdown)

- Host: 192.168.65.254 (your database host)

- Port: 11433 (your database port)

- Login: Your database username (e.g., FastLogin)

- Password: Your database password

- Extra: {"database":"tpch10"}

For ODBC connections, the database name should be specified in the Extra JSON field using {"database":"your_database_name"}.

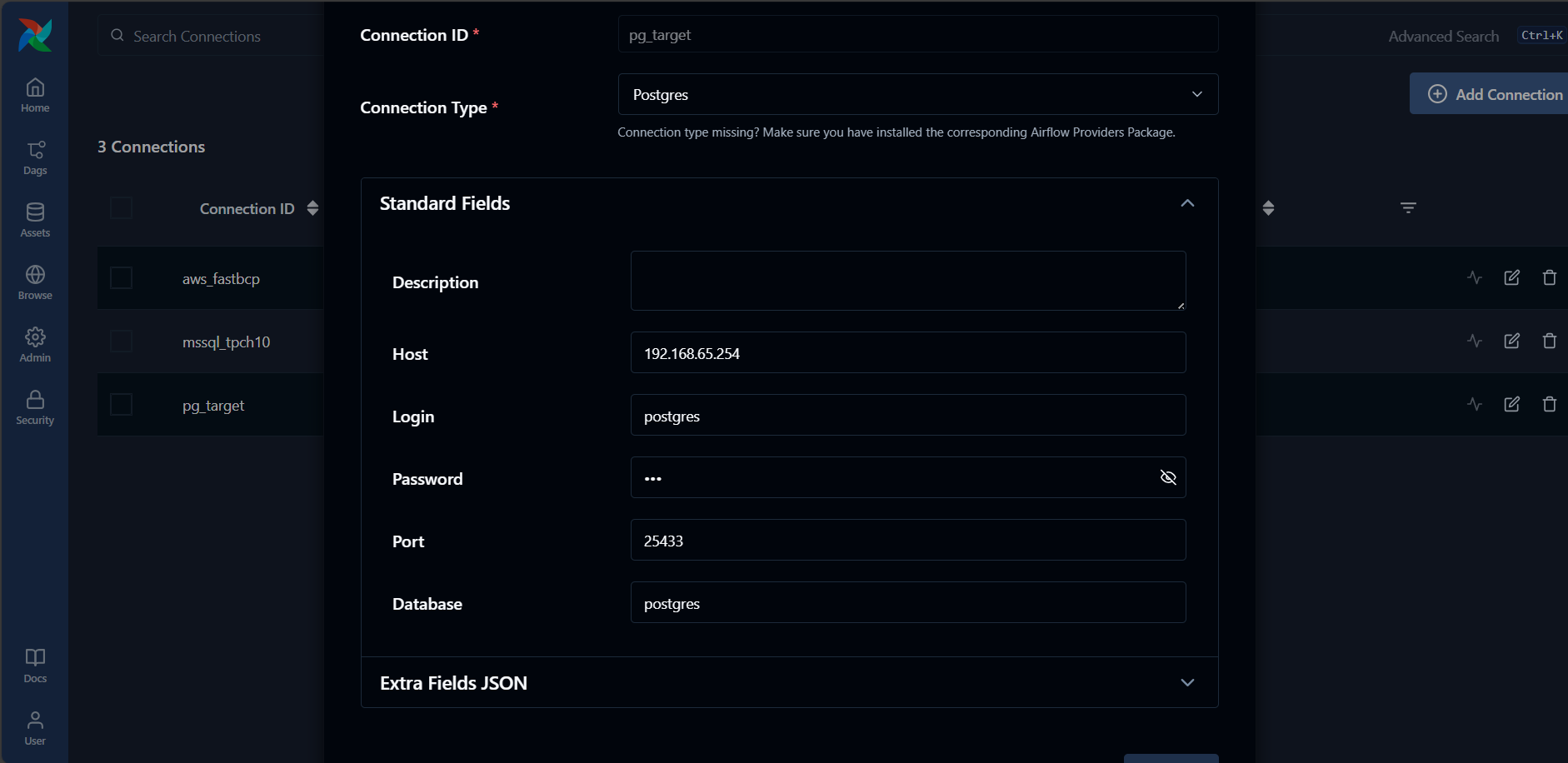

B) Target Database Connection (PostgreSQL Example)

Create a PostgreSQL connection for your target database:

- Navigate to Admin → Connections in the Airflow UI

- Click + to create a new connection

- In the Connection Type dropdown, select Postgres

- Configure the connection:

Connection Settings:

- Connection Id: pg_target (or your preferred name)

- Connection Type: Postgres (select this from the dropdown)

- Host: 192.168.65.254 (your database host)

- Port: 25433 (your database port)

- Schema: postgres (this is the database name)

- Login: postgres (your database username)

- Password: Your database password

For Postgres connections, the database name is specified in the Schema field.
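If you prefer to define these two connections outside the UI, Airflow can also read connections from `AIRFLOW_CONN_<CONN_ID>` environment variables. The sketch below builds the JSON form of those variables for the settings above. It assumes your Airflow version accepts JSON-encoded connection variables (recent releases do; check the documentation for yours). The `connection_env` helper is hypothetical and the passwords are placeholders.

```python
import json


def connection_env(conn_id: str, **fields) -> tuple[str, str]:
    """Build the (name, value) pair for an AIRFLOW_CONN_<ID> variable."""
    name = f"AIRFLOW_CONN_{conn_id.upper()}"
    return name, json.dumps(fields)


# ODBC source: the database name goes into the Extra JSON.
source = connection_env(
    "mssql_tpch10",
    conn_type="odbc",
    host="192.168.65.254",
    port=11433,
    login="FastLogin",
    password="<your password>",  # placeholder, never commit real secrets
    extra={"database": "tpch10"},
)

# Postgres target: the database name goes into the schema field.
target = connection_env(
    "pg_target",
    conn_type="postgres",
    host="192.168.65.254",
    port=25433,
    login="postgres",
    password="<your password>",  # placeholder
    schema="postgres",
)

if __name__ == "__main__":
    for name, value in (source, target):
        print(f"export {name}='{value}'")
```

The two definitions mirror the difference described above: Extra carries the database for the ODBC connection, while Schema carries it for Postgres.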

Creating a FastTransfer DAG

Complete DAG Example

Create a DAG file (e.g., fasttransfer_orders_mssql_to_pg.py) in your Airflow DAGs folder:

from datetime import datetime

from airflow import DAG
from airflow.sdk.bases.hook import BaseHook
from airflow.providers.docker.operators.docker import DockerOperator


def _conn_parts(conn_id: str):
    """
    Extract connection details from Airflow connection.

    Works with:
    - ODBC connections (for MSSQL): database in Extra JSON {"database":"name"}
    - Postgres connections: database in Schema field

    Returns:
        tuple: (host, port, user, password, database)
    """
    c = BaseHook.get_connection(conn_id)
    host = c.host
    port = c.port
    user = c.login
    pwd = c.password

    # Database in Extra {"database": "..."} (for ODBC MSSQL) or in Schema (for Postgres)
    db = c.extra_dejson.get("database") or c.schema
    if not db:
        raise ValueError(
            f"Connection '{conn_id}': database not found. Add it to Extra JSON "
            f'{{"database":"..."}} (for ODBC) or Schema field (for Postgres).'
        )
    return host, port, user, pwd, db


with DAG(
    dag_id="fasttransfer_orders_mssql_to_pg",
    start_date=datetime(2025, 1, 1),
    schedule=None,
    catchup=False,
    tags=["fasttransfer", "mssql", "postgres", "docker"],
) as dag:
    # Extract connection details
    mssql_host, mssql_port, mssql_user, mssql_pwd, mssql_db = _conn_parts("mssql_tpch10")
    pg_host, pg_port, pg_user, pg_pwd, pg_db = _conn_parts("pg_target")

    # FastTransfer Docker task
    transfer_task = DockerOperator(
        task_id="transfer_orders",
        image="arpeio/fasttransfer:latest",
        docker_url="tcp://host.docker.internal:2375",
        api_version="auto",
        auto_remove="success",
        mount_tmp_dir=False,  # Avoids the remote-engine tmp mount warning
        do_xcom_push=False,
        command=[
            "--sourceconnectiontype", "mssql",
            "--sourceserver", f"{mssql_host},{mssql_port}",
            "--sourcedatabase", mssql_db,
            "--sourceuser", mssql_user,
            "--sourcepassword", mssql_pwd,
            "--sourceschema", "dbo",
            "--sourcetable", "orders",
            "--targetconnectiontype", "pgcopy",
            "--targetserver", f"{pg_host}:{pg_port}",
            "--targetdatabase", pg_db,
            "--targetuser", pg_user,
            "--targetpassword", pg_pwd,
            "--targetschema", "public",
            "--targettable", "orders",
            "--loadmode", "Truncate",
            "--mapmethod", "Name",
            "--method", "Ntile",
            "--degree", "12",
            "--distributekeycolumn", "o_orderkey",
            "--nobanner",
        ],
        network_mode="bridge",
    )
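The DAG above looks up both connections each time the file is parsed. One possible alternative, sketched here as an assumption rather than as the documented FastTransfer approach, is to pass Jinja expressions based on Airflow's `conn` template variable, so the values are resolved only when the task runs. This relies on `command` being a templated field of DockerOperator and on connection IDs that are valid Jinja attribute names. The `conn_field` helper is hypothetical.

```python
def conn_field(conn_id: str, field: str) -> str:
    """Return a Jinja expression that Airflow resolves when the task runs."""
    return "{{ conn." + conn_id + "." + field + " }}"


SRC, TGT = "mssql_tpch10", "pg_target"

templated_command = [
    "--sourceconnectiontype", "mssql",
    # SQL Server expects host,port while PostgreSQL expects host:port.
    "--sourceserver", f"{conn_field(SRC, 'host')},{conn_field(SRC, 'port')}",
    # ODBC connection: database name lives in the Extra JSON.
    "--sourcedatabase", conn_field(SRC, "extra_dejson.database"),
    "--sourceuser", conn_field(SRC, "login"),
    "--sourcepassword", conn_field(SRC, "password"),
    "--targetconnectiontype", "pgcopy",
    "--targetserver", f"{conn_field(TGT, 'host')}:{conn_field(TGT, 'port')}",
    # Postgres connection: database name lives in the Schema field.
    "--targetdatabase", conn_field(TGT, "schema"),
    "--targetuser", conn_field(TGT, "login"),
    "--targetpassword", conn_field(TGT, "password"),
    # ... remaining flags unchanged from the DAG above
]
```

Passing `templated_command` as the operator's `command` would replace the two `_conn_parts` calls. The trade-off is that a missing database name surfaces at run time instead of raising the explicit `ValueError`.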

Key Parameters Explained

- image: Specifies the FastTransfer Docker image (arpeio/fasttransfer:latest)

- command: List of FastTransfer CLI arguments

- auto_remove: Automatically remove the container after successful execution

- mount_tmp_dir: Set to False to avoid warnings with remote Docker daemons
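Since `command` is just a list of CLI arguments, it can be generated by a small function when several tables need the same settings. The sketch below uses only the flags that appear in the DAG above. The helpers `endpoint_args` and `build_command` are hypothetical names, and the host, user, and password values are placeholders.

```python
def endpoint_args(side: str, **values) -> list[str]:
    """Turn keyword values into flags such as --sourceserver or --targettable."""
    args: list[str] = []
    for key, value in values.items():
        args += [f"--{side}{key}", str(value)]
    return args


def build_command(source: dict, target: dict, key_column: str, degree: int = 12) -> list[str]:
    """Assemble the FastTransfer argument list used by the DockerOperator."""
    return [
        *endpoint_args("source", **source),
        *endpoint_args("target", **target),
        "--loadmode", "Truncate",
        "--mapmethod", "Name",
        "--method", "Ntile",
        "--degree", str(degree),
        "--distributekeycolumn", key_column,
        "--nobanner",
    ]


# Placeholder values; in a DAG these would come from Airflow connections.
command = build_command(
    source=dict(connectiontype="mssql", server="host,11433", database="tpch10",
                user="user", password="secret", schema="dbo", table="orders"),
    target=dict(connectiontype="pgcopy", server="host:25433", database="postgres",
                user="user", password="secret", schema="public", table="orders"),
    key_column="o_orderkey",
)
```

Every element is converted to a string because the Docker API expects string arguments. This is also why the original DAG passes the degree as "12" rather than 12.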

Helper Function: _conn_parts()

This utility function extracts connection details from an Airflow connection:

- Retrieves host, port, username, and password from the connection

- Extracts the database name from either the Extra JSON field (for ODBC connections) or the Schema field (for Postgres connections)

- Raises a ValueError if the database name is missing from both fields

- Returns all components needed to build the FastTransfer connection parameters

This approach ensures that credentials are managed centrally in Airflow and never hardcoded in DAG files.
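The database lookup is the only non-obvious part of the helper, so here it is in isolation. This is an illustrative sketch that runs without Airflow: `SimpleNamespace` objects stand in for Airflow connections, and `resolve_database` is a hypothetical name for the same rule that `_conn_parts()` applies.

```python
from types import SimpleNamespace


def resolve_database(conn) -> str:
    """Prefer Extra JSON {"database": ...}, then fall back to the Schema field."""
    db = conn.extra_dejson.get("database") or conn.schema
    if not db:
        raise ValueError("database not found in Extra JSON or Schema field")
    return db


# Stand-ins for the two connection styles used in this guide.
odbc_like = SimpleNamespace(extra_dejson={"database": "tpch10"}, schema=None)
pg_like = SimpleNamespace(extra_dejson={}, schema="postgres")
empty = SimpleNamespace(extra_dejson={}, schema=None)

print(resolve_database(odbc_like))  # database taken from Extra
print(resolve_database(pg_like))    # database taken from Schema
```

Calling `resolve_database(empty)` raises the `ValueError`, which is the same failure you would see in the DAG when neither field is filled in.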

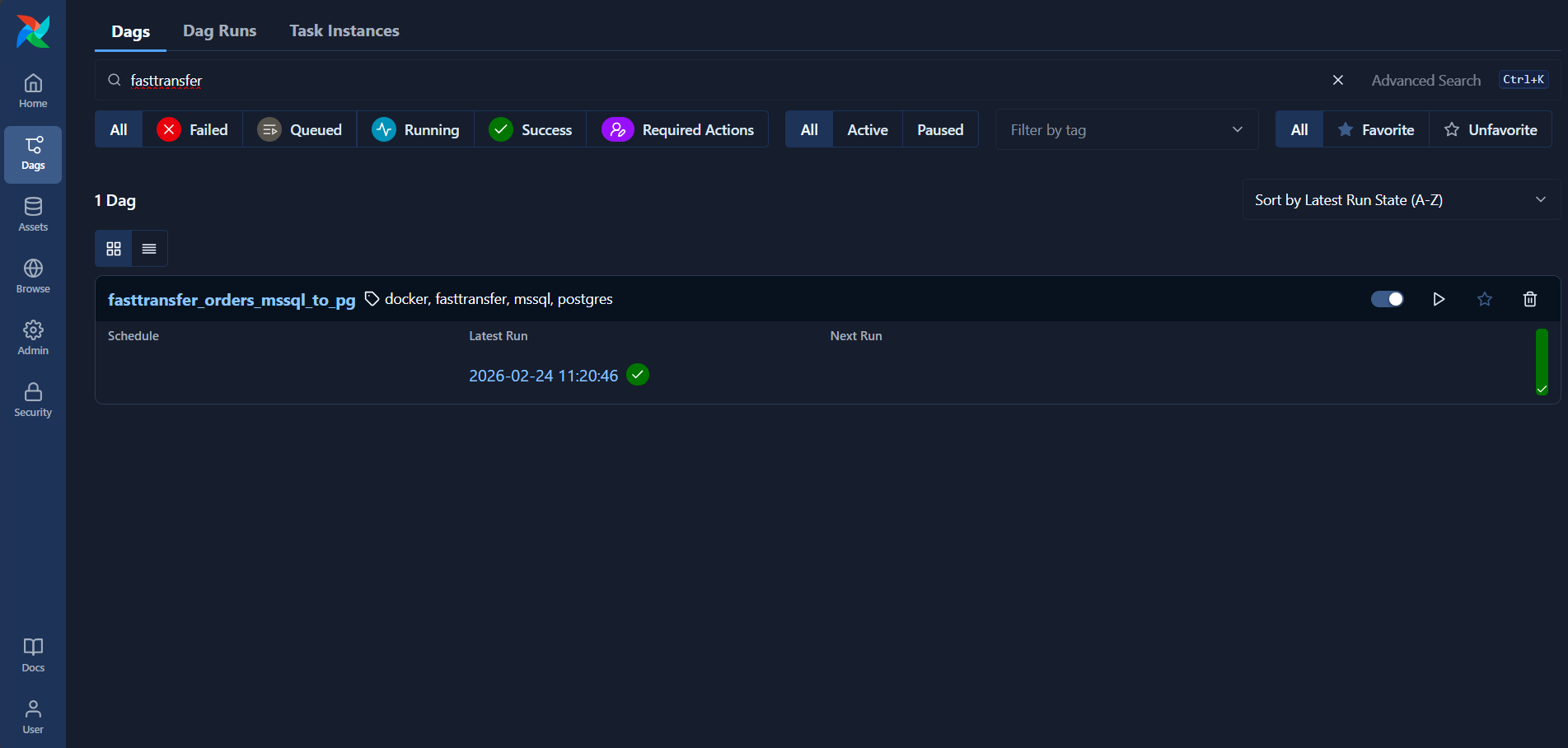

Running and Monitoring the DAG

1. Verify the DAG in Airflow UI

After placing your DAG file in the DAGs folder, it should appear in the Airflow UI:

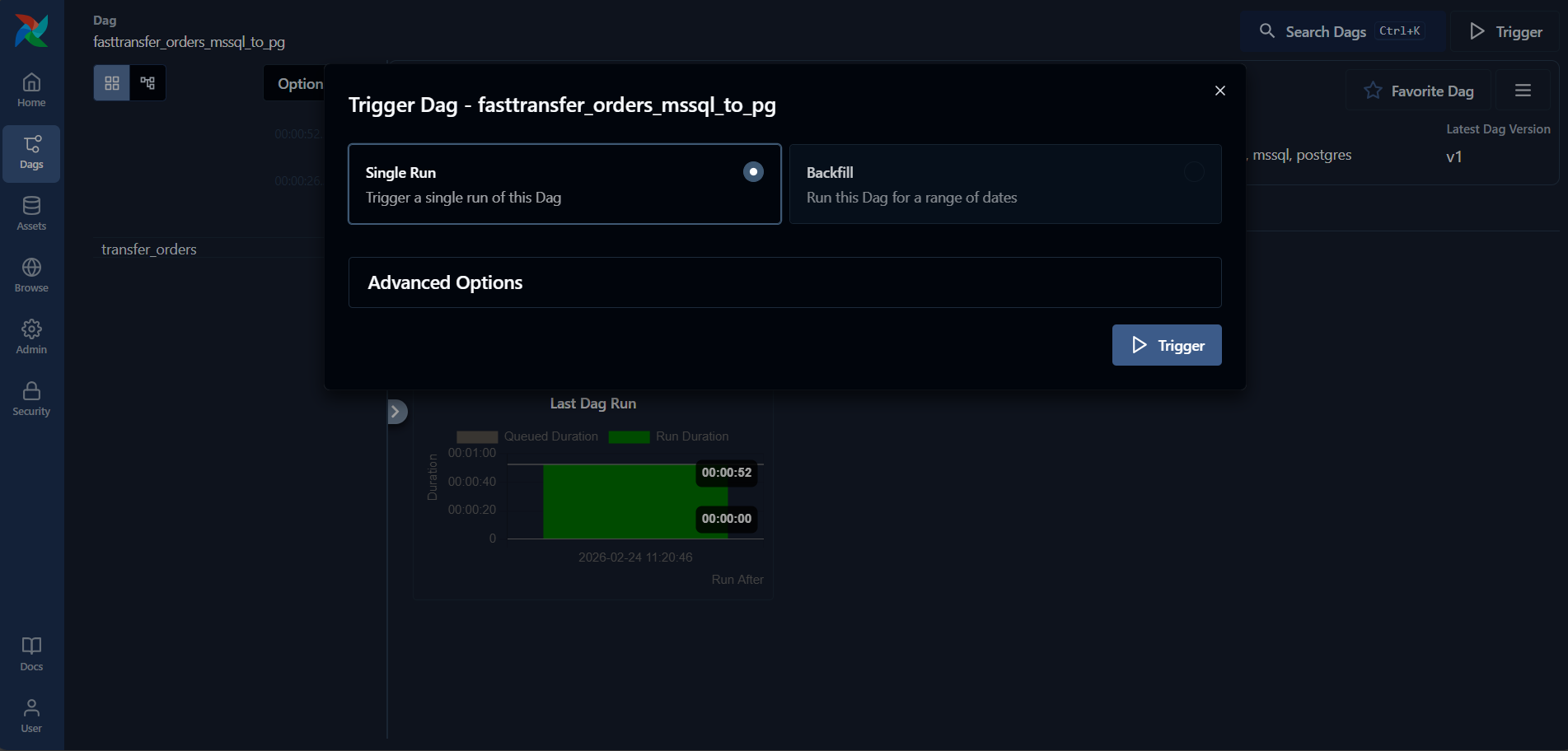

2. Trigger the DAG

Click on the DAG and then click the Trigger DAG button (play icon) to start execution.

3. Monitor Execution Progress

The Grid view shows the execution status in real time:

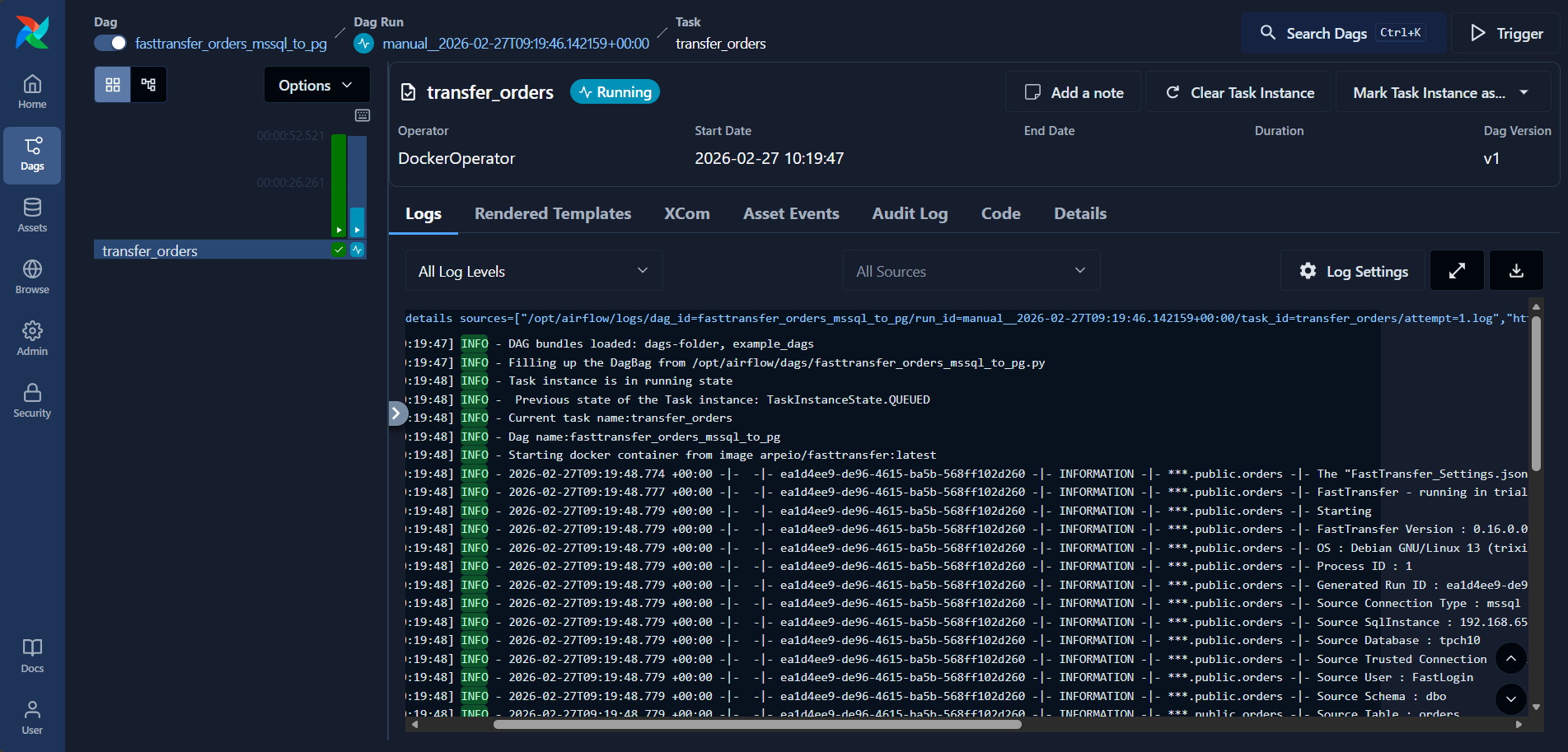

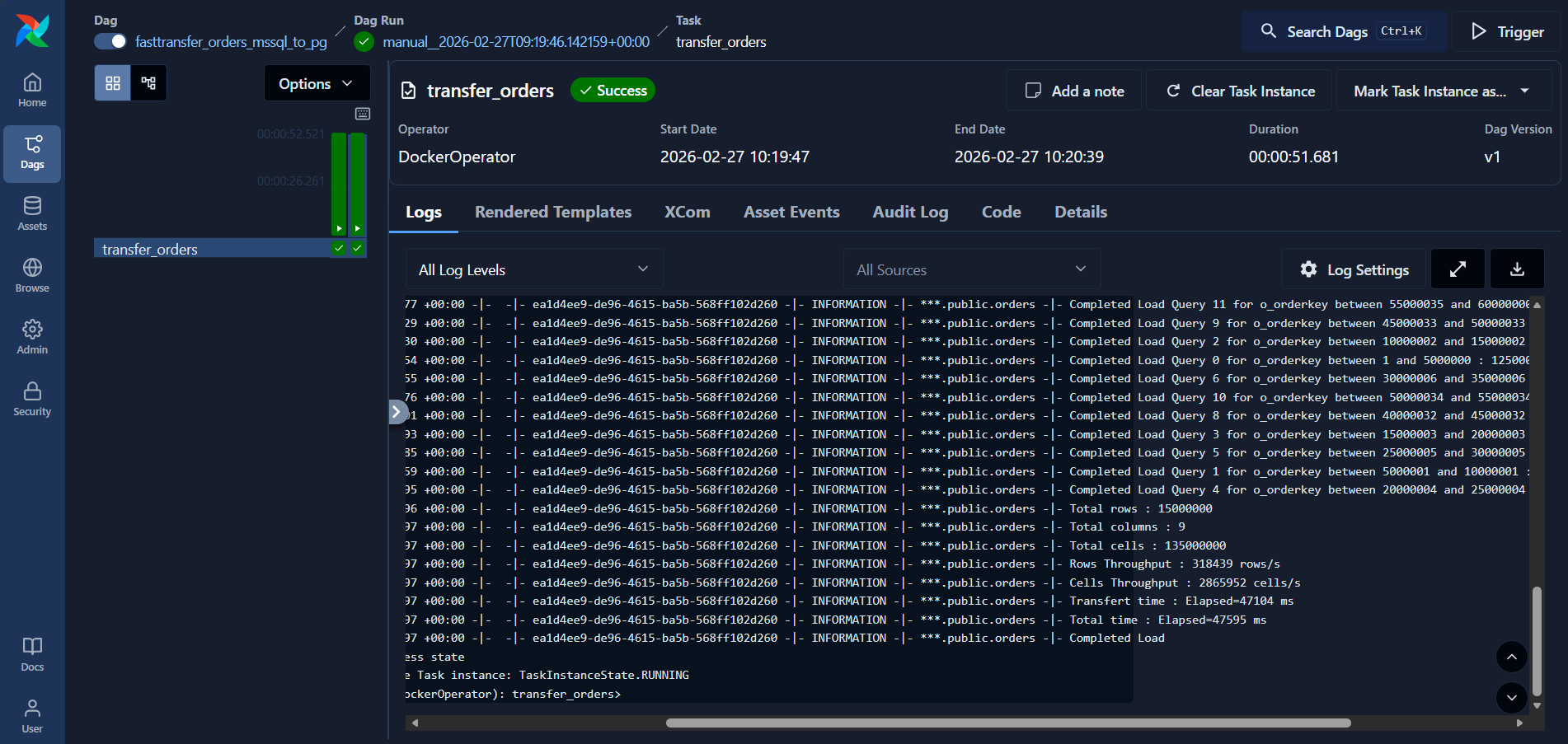

4. View Detailed Logs

Click on the task execution (green square) and then click the Logs tab to view detailed execution logs.

Example Log Output

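The logs provide detailed information about the FastTransfer execution. The sample below was captured from a run with different connection settings and a degree of -4, so its server names, schemas, and degree differ from the DAG shown earlier: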

[2026-03-02 15:51:45] INFO - Running docker container: arpeio/fasttransfer:latest

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.375 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- The "FastTransfer_Settings.json" file does not exist. Using default settings. Console Only with loglevel=Information

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.377 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- FastTransfer - running in trial mode – trial mode will end on 2026‑03‑27 - normal licensed mode will then start (24 day(s) left).

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.377 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Starting

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.377 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- FastTransfer Version : 0.16.0.0 Architecture : X64 - Framework : .NET 8.0.24

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.377 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- OS : Debian GNU/Linux 13 (trixie)

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Process ID : 1

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Generated Run ID : ca195802-59f7-4b13-85e8-0bb73e8f7b32

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Connection Type : mssql

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source SqlInstance : sql22,1433

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Database : tpch

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Trusted Connection : False

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source User : migadmin

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Schema : tpch10

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Table : orders

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Type : pgcopy

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Server : host.docker.internal:5432

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Database : tpch

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Schema : tpch_1_copy

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Table : orders

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Trusted Connection : False

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target User : pytabextract_pguser

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Columns Map Method : Name

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Degree : -4

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Distribute Method : Ntile

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Distribute Column : o_orderkey

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Bulkcopy Batch Size : 1048576

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Load Mode : Truncate

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.378 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Use Work Tables : False

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.379 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Encoding used : Unicode (UTF-8) - 65001 - utf-8

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.517 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Connection String : Data Source=sql22,1433;Initial Catalog=tpch;User ID=migadmin;Password=xxxxx;Connect Timeout=120;Encrypt=True;Trust Server Certificate=True;Application Name=FastTransfer;Application Intent=ReadOnly;Command Timeout=10800

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.517 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Connection String : Host=host.docker.internal;Port=5432;Database=tpch;Trust Server Certificate=True;Application Name=FastTransfer;Timeout=15;Command Timeout=10800;Username=pytabextract_pguser;Password=xxxxx

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.517 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Source Database Version : Microsoft SQL Server 2022 (RTM-CU2) (KB5023127) - 16.0.4015.1 (X64) Feb 27 2023 15:40:01 Copyright (C) 2022 Microsoft Corporation Developer Edition (64-bit) on Linux (Ubuntu 20.04.5 LTS) <X64>

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.517 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Target Database Version : PostgreSQL 15.15 (Ubuntu 15.15-1.pgdg22.04+1) on x86_64-pc-linux-gnu, compiled by gcc (Ubuntu 11.4.0-1ubuntu1~22.04.2) 11.4.0, 64-bit

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.634 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Degree of parallelism was computed to 5 (=> 20\4)

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.870 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Ntile DataSegments Computation Completed in 235 ms

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.872 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Start Loading Data using distribution method NTile

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.884 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Start Loading Data using distribution method NTile

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.891 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Start Loading Data using distribution method NTile

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.900 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Start Loading Data using distribution method NTile

[2026-03-02 15:51:45] INFO - 2026-03-02T15:51:45.911 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Start Loading Data using distribution method NTile

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.233 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load Query 3 for o_orderkey between 36000003 and 48000003 : 3000001 rows x 9 columns in 8360ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.288 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load Query 1 for o_orderkey between 12000001 and 24000001 : 3000001 rows x 9 columns in 8415ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.330 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load Query 4 for o_orderkey between 48000004 and 60000000 : 2999997 rows x 9 columns in 8458ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.349 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load Query 0 for o_orderkey between 1 and 12000000 : 3000000 rows x 9 columns in 8476ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.442 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load Query 2 for o_orderkey between 24000002 and 36000002 : 3000001 rows x 9 columns in 8569ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Total rows : 15000000

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Total columns : 9

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Total cells : 135000000

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Rows Throughput : 1702771 rows/s

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Cells Throughput : 15324949 cells/s

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Transfert time : Elapsed=8809 ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Total time : Elapsed=9135 ms

[2026-03-02 15:51:54] INFO - 2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Completed Load

[2026-03-02 15:51:54] INFO - Task instance in success state

[2026-03-02 15:51:54] INFO - Previous state of the Task instance: TaskInstanceState.RUNNING

[2026-03-02 15:51:54] INFO - Task operator:<Task(DockerOperator): transfer_orders>

Key metrics visible in the logs:

- Total rows transferred: 15,000,000 rows

- Throughput: 1,702,771 rows/second

- Transfer time: about 8.8 seconds (9.1 seconds total)

- Parallel execution: 5 concurrent workers using the Ntile distribution method
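These figures can be pulled out of the task log programmatically, for example to feed a downstream check. The sketch below assumes the log layout shown above, where fields are separated by " -|- " and the message is the last field. The function names are our own, and the spelling "Transfert" is copied from the log output as-is.

```python
import re

LOG = """\
2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Total rows : 15000000
2026-03-02T15:51:54.443 +00:00 -|- -|- ca195802-59f7-4b13-85e8-0bb73e8f7b32 -|- INFORMATION -|- tpch.tpch_1_copy.orders -|- Transfert time : Elapsed=8809 ms
"""


def parse_metrics(log_text: str) -> dict:
    """Extract the row count and transfer time from FastTransfer log lines."""
    metrics = {}
    for line in log_text.splitlines():
        # The message is whatever follows the last " -|- " separator.
        message = line.rsplit(" -|- ", 1)[-1].strip()
        if m := re.fullmatch(r"Total rows : (\d+)", message):
            metrics["rows"] = int(m.group(1))
        elif m := re.fullmatch(r"Transfert time : Elapsed=(\d+) ms", message):
            metrics["transfer_ms"] = int(m.group(1))
    return metrics


def rows_per_second(metrics: dict) -> float:
    """Throughput = rows transferred / transfer time in seconds."""
    return metrics["rows"] / (metrics["transfer_ms"] / 1000)


metrics = parse_metrics(LOG)
print(metrics, round(rows_per_second(metrics)))
```

The recomputed throughput lands within a fraction of a percent of the value FastTransfer reports, since the log rounds the elapsed time to whole milliseconds.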

Advanced Use Cases

Scheduled Daily Transfers

Add a schedule to run the transfer daily at 2 AM:

with DAG(
    dag_id="fasttransfer_orders_mssql_to_pg",
    start_date=datetime(2025, 1, 1),
    schedule="0 2 * * *",  # Daily at 2 AM
    catchup=False,
    tags=["fasttransfer", "mssql", "postgres", "docker"],
) as dag:
    # ... tasks
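A scheduled run usually benefits from the retry handling mentioned at the start of this guide. As a minimal sketch, the DAG arguments can be collected in a dictionary that adds `default_args` with Airflow's standard `retries` and `retry_delay` task settings. The retry count and delay below are arbitrary example values, not FastTransfer recommendations.

```python
from datetime import datetime, timedelta

dag_kwargs = {
    "dag_id": "fasttransfer_orders_mssql_to_pg",
    "start_date": datetime(2025, 1, 1),
    "schedule": "0 2 * * *",  # minute=0, hour=2, every day
    "catchup": False,
    "tags": ["fasttransfer", "mssql", "postgres", "docker"],
    # Applied to every task in the DAG unless a task overrides them.
    "default_args": {
        "retries": 2,                         # example value
        "retry_delay": timedelta(minutes=5),  # example value
    },
}

# In the DAG file this would be used as:
#   with DAG(**dag_kwargs) as dag:
#       ...tasks
```

Keeping the arguments in one dictionary also makes it easy to reuse the same schedule and retry policy across several transfer DAGs.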

Conclusion

Integrating FastTransfer with Apache Airflow provides a powerful combination: Airflow's industry-standard orchestration and monitoring capabilities paired with FastTransfer's high-performance parallel data transfers.

Whether you're building scheduled ETL pipelines, orchestrating complex multi-table migrations, or creating sophisticated data workflows with branching and error handling, this integration delivers the reliability, performance, and observability modern data teams need.

Want to try it on your own data? Download FastTransfer and get a free 30-day trial.

Resources

- FastTransfer: https://fasttransfer.arpe.io

- FastTransfer Docker Image: https://hub.docker.com/repository/docker/arpeio/fasttransfer

- FastTransfer Documentation: https://fasttransfer-docs.arpe.io/latest/

- FastTransfer Airflow Integration: https://fasttransfer-docs.arpe.io/latest/integration/apache-airflow

- Apache Airflow Documentation: https://airflow.apache.org/docs/

- Airflow Docker Provider: https://airflow.apache.org/docs/apache-airflow-providers-docker/stable/